NVIDIA GTC 2026: The Inference Inflection and the Rise of Agentic AI Factories

Key Highlights

- The Inference Inflection: The GTC keynote explained the massive shift in compute demand as AI moves from pre-training to real-time agentic inference.

- The NVIDIA Vera Rubin Platform: A "generational leap" featuring seven new chips (including NVIDIA Vera CPU and NVIDIA Rubin GPU) and five specialized racks designed to power the world’s largest AI factories.

- Disaggregated Inference: The newly integrated NVIDIA Groq 3 LPX rack works alongside the Vera Rubin platform to deliver up to 35x higher inference throughput per megawatt.

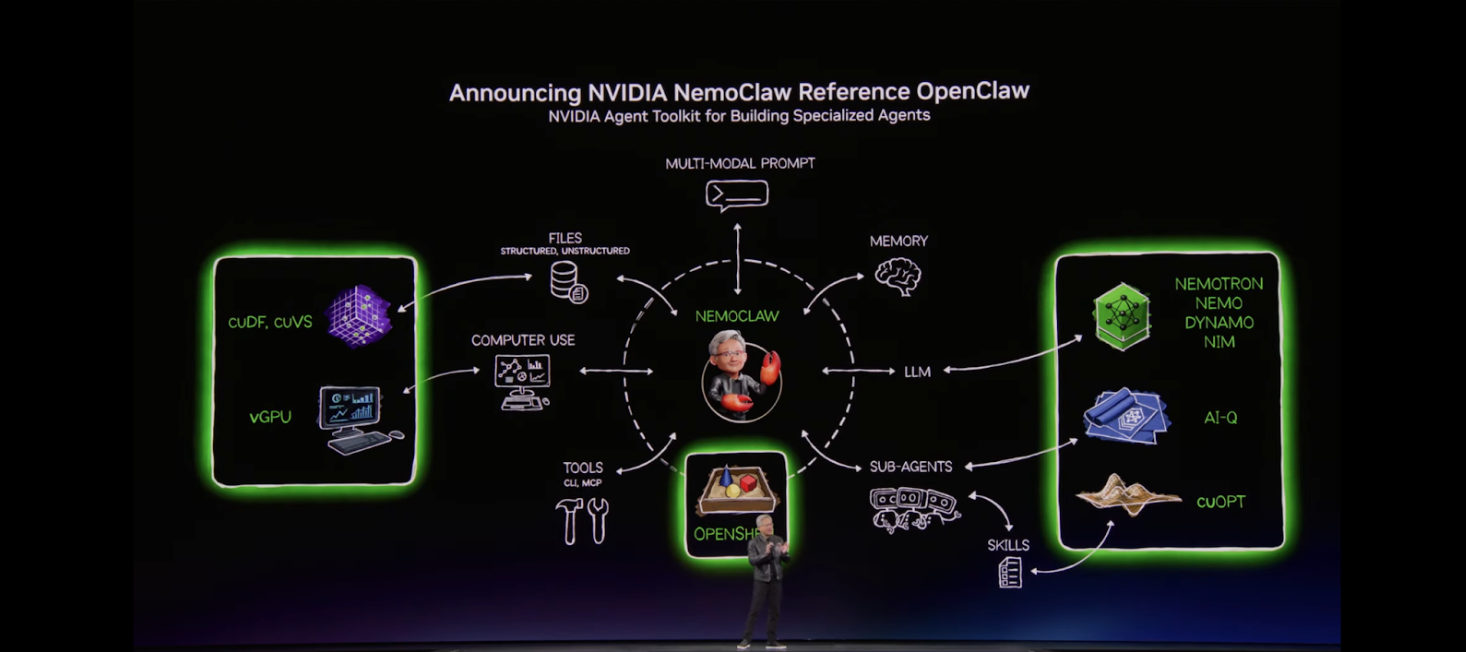

The "Moment of Claws": NVIDIA is driving the next AI inflection point through NVIDIA OpenShell, an open-source runtime that enables autonomous agents to reason and act safely within isolated sandboxes.

The $1 Trillion Inference Inflection

At GTC 2026, the keynote underscored the massive shift from pre-training models to real-time execution, as Huang revealed a staggering jump in demand for AI infrastructure: a doubling that brings it to the $1 trillion mark.

The doubling of demand is driven by the era of agentic AI: systems that plan tasks, run tools, and validate results. Computing is no longer retrieval-based; it has progressed from generative to reasoning-oriented and, finally, agentic. As AI agents carry out tasks through iterative cycles of planning, breaking tasks down, reasoning, and reflecting, inference compute demand has grown by roughly 1,000,000x in just two years.
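For scale: if that millionfold increase over two years had compounded at a steady rate (an assumption made here only for illustration), it would correspond to roughly a thousandfold increase per year, where $g$ is the annual growth factor:

```latex
g^{2} = 10^{6} \quad\Longrightarrow\quad g = \left(10^{6}\right)^{1/2} = 10^{3}
```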

Vera Rubin: The Foundation of the AI Factory

NVIDIA launched the Vera Rubin platform, a supercomputer where multiple racks work together as one massive, coherent system to maximize tokens per watt.

- NVIDIA Groq 3 LPX Rack: Marks a milestone in accelerated computing by enabling disaggregated inference. While the Rubin GPU handles prefill and decode attention and generates the massive KV cache, the NVIDIA Groq LPU manages the low-latency decode phase, providing up to 10x more revenue opportunity for trillion-parameter models.

- Vera CPU Rack: Built around Vera, the world’s first processor designed specifically for agentic AI. Vera delivers results twice as efficiently and 50% faster than traditional CPUs, and with 88 custom Olympus cores and 1.5TB of LPDDR5X memory, it provides the single-threaded performance agents need to run code and validate results.

- NVIDIA BlueField-4 STX Rack: An AI-native storage infrastructure that treats data as "Context Memory." Using the NVIDIA DOCA Memos™ framework, it boosts inference throughput by up to 5x for long-context reasoning.
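The prefill/decode split behind disaggregated inference can be illustrated with a toy sketch. This is a conceptual model only, not NVIDIA or Groq software: the `KVCache` class, the worker functions, and the "next token" rule are hypothetical stand-ins, and plain integers stand in for key/value tensors. It also simplifies the split described for the Groq 3 LPX rack by running all of decode on one worker, whereas the keynote description assigns decode attention to the Rubin GPU.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Toy KV cache: one integer per processed token stands in for real K/V tensors."""
    entries: list[int] = field(default_factory=list)


def prefill_worker(prompt_tokens: list[int]) -> KVCache:
    """Throughput-bound phase (the 'GPU pool' in this sketch).

    Processes the whole prompt in one pass and returns the KV cache
    that is handed off to the decode pool.
    """
    return KVCache(entries=list(prompt_tokens))


def decode_worker(cache: KVCache, max_new_tokens: int) -> list[int]:
    """Latency-bound phase (the 'low-latency decode pool' in this sketch).

    Emits one token per step and appends it to the cache so the next
    step can use it. The 'model' is a hypothetical toy rule.
    """
    generated: list[int] = []
    for _ in range(max_new_tokens):
        next_token = sum(cache.entries) % 100  # toy rule: depends on every prior token
        generated.append(next_token)
        cache.entries.append(next_token)
    return generated


def serve(prompt_tokens: list[int], max_new_tokens: int) -> list[int]:
    """Disaggregated request path: prefill on one pool, then hand the cache to decode."""
    cache = prefill_worker(prompt_tokens)        # step 1: build the KV cache
    return decode_worker(cache, max_new_tokens)  # step 2: generate tokens from it


print(serve([1, 2, 3], max_new_tokens=3))  # prints [6, 12, 24]
```

In a real deployment the hand-off would move the KV cache between hardware pools; the sketch only shows the order of the two phases and what passes between them.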

NVIDIA OpenShell: Enabling the "Moment of Claws"

In early 2026, the emergence of OpenClaw drew attention to the demand for autonomous agents capable of executing multi-step workflows. By enabling agents to interact with local files and external tools, frameworks like OpenClaw illustrated a shift toward persistent, execution-oriented systems.

To support the safe deployment of these state-of-the-art agents, NVIDIA is celebrating the “moment of claws” with NVIDIA OpenShell, an open-source runtime designed to govern how agents execute.

We’re also working with NVIDIA on NVIDIA NemoClaw, an open-source stack that lets users run OpenClaw always-on assistants more safely with a single command. Part of the NVIDIA Agent Toolkit, NemoClaw installs the NVIDIA OpenShell runtime (a secure environment for running autonomous agents) along with open-source models such as NVIDIA Nemotron.

NVIDIA OpenShell sits between the agent and the infrastructure, running any coding agent—OpenClaw, Claude Code, Cursor, or Codex—in an isolated sandbox with zero code changes. Every action is policy-enforced at the infrastructure layer, ensuring privacy and security for long-running agentic workflows.
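To make "policy-enforced at the infrastructure layer" more concrete, here is a minimal default-deny gate that sits between an agent and the resources it touches. It is a hypothetical illustration, not OpenShell's policy format or API: the `Action` and `Policy` types, the action kinds, and the example paths and host name are all assumptions.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import Callable


@dataclass(frozen=True)
class Action:
    """An action the agent wants to perform (hypothetical schema)."""
    kind: str    # "read_file", "write_file", or "network" in this sketch
    target: str  # an absolute path, or a host name for "network"


@dataclass(frozen=True)
class Policy:
    """Allowlists enforced outside the agent (hypothetical schema)."""
    read_roots: tuple[str, ...]
    write_roots: tuple[str, ...]
    allowed_hosts: tuple[str, ...]


def _within(path: str, roots: tuple[str, ...]) -> bool:
    """True if path is an absolute path equal to or under one of the roots."""
    p = PurePosixPath(path)
    # Reject relative paths and '..' segments, which could escape a root.
    if not p.is_absolute() or ".." in p.parts:
        return False
    return any(p == PurePosixPath(r) or PurePosixPath(r) in p.parents for r in roots)


def is_allowed(policy: Policy, action: Action) -> bool:
    if action.kind == "read_file":
        return _within(action.target, policy.read_roots)
    if action.kind == "write_file":
        return _within(action.target, policy.write_roots)
    if action.kind == "network":
        return action.target in policy.allowed_hosts
    return False  # default deny: unknown action kinds are never permitted


def execute(policy: Policy, action: Action, run: Callable[[Action], str]) -> str:
    """Check the policy before handing the action to the underlying runner."""
    if not is_allowed(policy, action):
        raise PermissionError(f"blocked by policy: {action.kind} {action.target}")
    return run(action)


policy = Policy(read_roots=("/workspace",), write_roots=("/workspace/out",),
                allowed_hosts=("api.example.com",))
print(is_allowed(policy, Action("read_file", "/workspace/data.csv")))        # True
print(is_allowed(policy, Action("read_file", "/workspace/../etc/passwd")))  # False
```

Because the check wraps the runner rather than living inside the agent, the agent itself needs no changes. A production runtime would more likely enforce such rules at the OS or container level than in application code.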

Source: NVIDIA GTC 2026 Keynote

In tandem with these global advancements, Bitdeer AI is leading the charge for secure agentic infrastructure in the Asia Pacific region. Developers and enterprises can now find Bitdeer AI featured on build.nvidia.com as a provider of the infrastructure needed to run the underlying "brains" of these agents.

We are also proud to highlight that Nemotron 3 Super is available on our platform. As the best-performing open model for OpenClaw, Nemotron 3 Super provides the high-level reasoning and planning capabilities required to fuel complex autonomous workflows.

Start building your own safe autonomous agent today directly from build.nvidia.com:

- Access NVIDIA OpenShell on build.nvidia.com.

- Seamlessly integrate frontier open model endpoints from Bitdeer AI to power your long-running agents.

Powered by NVIDIA GB200 NVL72 systems deployed in our state-of-the-art AI data centers, Bitdeer AI Cloud delivers the high-performance infrastructure required to run autonomous, self-evolving agents in NVIDIA OpenShell at scale. By combining secure runtime governance with a high-performance AI cloud, we empower developers to build the next generation of AI with stronger privacy and infrastructure reliability.