Bring MultiModal Reasoning to Production with NVIDIA Nemotron 3 Nano Omni on Bitdeer AI Cloud

As AI agents evolve beyond text and into real-world workflows, the ability to understand information and reason across multiple modalities is becoming essential. From video and audio to documents and UI screens, modern agentic systems require models that can reason across modalities with accuracy, efficiency, and enterprise readiness.

Today, we’re delighted to announce that NVIDIA Nemotron™ 3 Nano Omni is available at launch on our Bitdeer AI Model Studio. As part of the broader NVIDIA Nemotron family, it represents a new step forward in open, production-ready multimodal reasoning models.

What is NVIDIA Nemotron 3 Nano Omni

NVIDIA Nemotron 3 Nano Omni is an open multimodal foundation model designed for production agentic AI. It unifies reasoning across video, audio, images, documents, charts, and text in a single model—eliminating the need for fragmented multimodal pipelines.

By consolidating perception and reasoning into one system, Nemotron 3 Nano Omni simplifies agent development, reduces orchestration complexity, improves efficiency and scalability, and delivers leading multimodal accuracy. Built with open weights, datasets, and recipes, it enables developers and enterprises to customize, deploy, and operate multimodal agents with full control and flexibility.

Key Specifications:

● Model Size: 30B A3B

● Modalities: Input (text, image, video, audio), Output (text).

● Architecture: Hybrid Mixture of Experts (MoE) with Transformer-Mamba design

● VIsion Encoder: CRADIO v4-H

● Audio ENcoder: Parakeet

● Context Length: Up to 1M tokens

● Optimizations:

- Conv3D fortemporal video reasoning

- Efficient Video Sampling (EVS) for lower inference cost

● Quantization: FP8, NVFP4

Why a Unified Multimodal Model Matters

Many enterprise AI systems still rely on stitched-together pipelines across vision, speech, OCR, and reasoning models. This approach introduces higher latency from repeated inference passes, increased operational complexity, and fragmented context across modalities.

Nemotron 3 Nano Omni addresses this by acting as a multimodal perception and reasoning layer within agent systems—enabling a unified perception → reasoning → action loop.

Enterprise use cases

Customer Service Agent: A customer service agent operates in a highly multimodal environment. It analyzes recorded customer interactions, including audio and speech transcriptions. It reasons over screen recordings of customer sessions and images such as screenshots of errors or invoices. At the same time, it reads documents like knowledge‑base articles, policies, and CRM history. Nemotron 3 Nano Omni unifies all of these signals so the agent understands not just what the customer said, but what they experienced and what the business rules allow—enabling accurate, context‑aware resolution.

Financial Analyst Agent: Financial analysis depends on more than text alone. This agent reasons across documents like financial filings and earnings transcripts, images such as charts and scanned reports, audio and speech from earnings calls, and video from investor presentations. Nemotron 3 Nano Omni ties together what executives say, how numbers are presented visually, and what the underlying documents show—producing grounded insights rather than surface‑level summaries.

Computer Use Agent: The computer use agent is one of the clearest demonstrations of unified multimodality. It analyzes video and images from screen recordings to understand UI state over time, interprets instructions and system audio cues, and reads documents like task instructions and validation policies. Nemotron 3 Nano Omni enables the agent to see the interface, understand intent, read constraints, and take the correct action—all within one reasoning loop. This collapses when perception and decision‑making are split across models.

Media and Entertainment Agent: Media workflows depend on more than transcripts alone. This agent reasons across video content, dialogue, on-screen text, and visual scene changes to support richer video and speech analysis. Nemotron 3 Nano Omni can generate dense captions that capture not just what is said, but what appears and happens on screen, while also improving video search and summarization across large content libraries. This helps media teams turn raw footage into searchable, contextualized, and production-ready assets more efficiently.

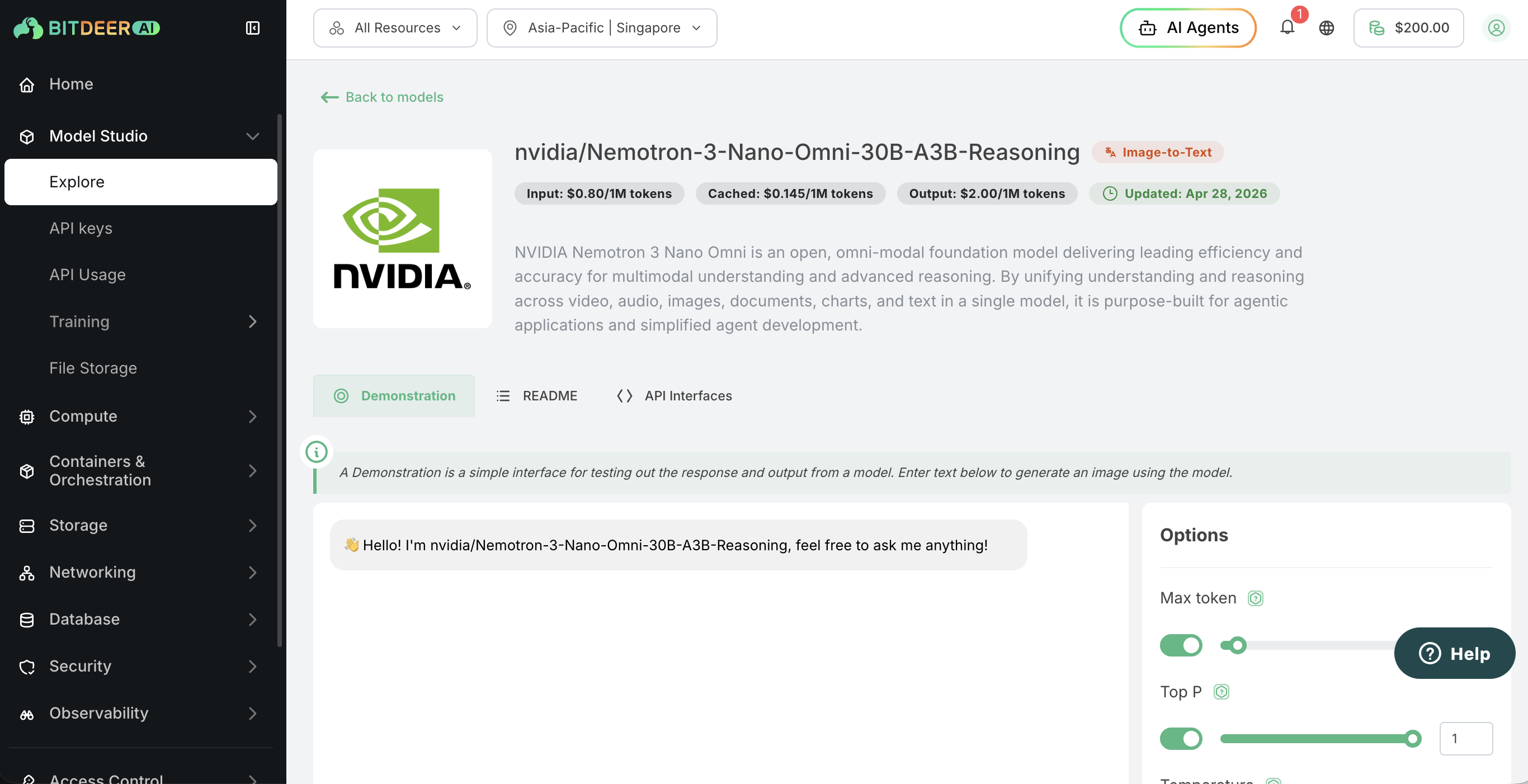

Run Nemotron 3 Nano Omni Via API on Bitdeer AI Model Studio

You can run Nemotron 3 Nano Omni on Bitdeer AI Model Studio, our serverless inference platform designed to make access to advanced foundation models simple and scalable. With a straightforward API, Model Studio allows developers and enterprises to start using models quickly without managing underlying infrastructure, reducing deployment complexity and time to value.

This makes it easier to integrate multimodal reasoning capabilities into applications and agentic workflows, while benefiting from a more flexible and efficient path from experimentation to production in just a few steps.

Get Started

- Log in to Bitdeer AI Model Studio

- Locate Nemotron 3 Nano Omni in the model list

- Generate API key and start making API calls

This streamlined workflow enables rapid integration of multimodal reasoning into applications and agent systems.

Conclusion

NVIDIA Nemotron 3 Nano Omni makes multimodal AI more practical for real-world deployments. Instead of managing multiple models and pipelines, teams can focus on building agentic applications, automating workflows, and delivering better user experiences.

With availability on Bitdeer AI Model Studio, organizations can move from experiment to production faster—turning multimodal AI into measurable business impact.